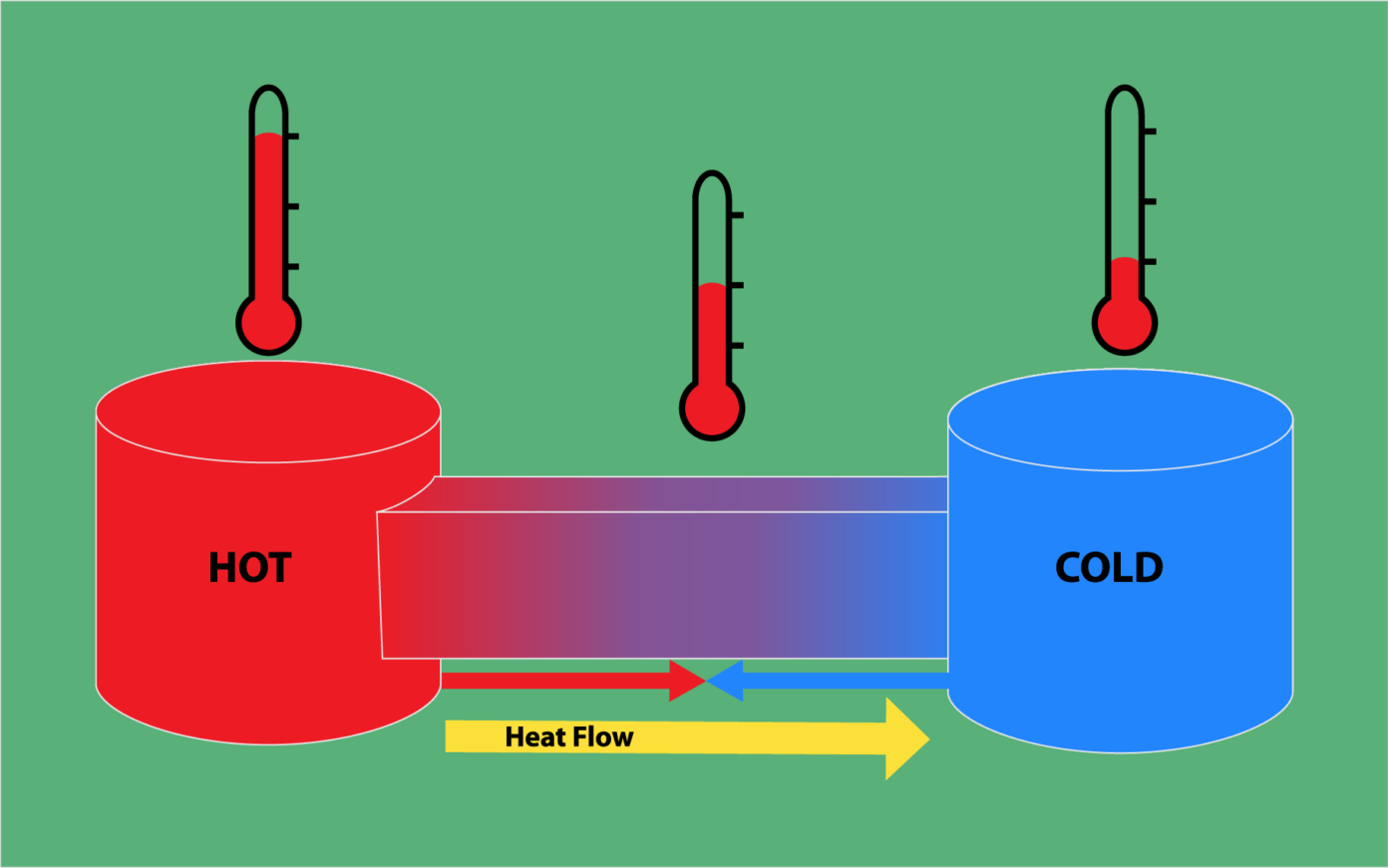

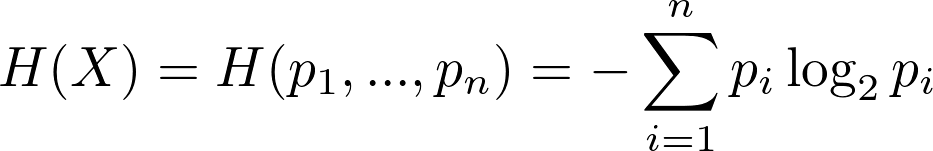

Introduction to Cross Entropy

The moment we hear the word entropy, it reminds me of thermodynamics. As the momentum of one molecule is transferred to another and energy changes from one form to another, entropy increases. Well, what does that mean? There is disorder in the system. Disorder does not mean that things get into a disordered state; in literal terms, it means there is a change happening in the system. Since the temperature of the system changes, the density, as well as the heat, changes in the system. Entropy is also defined as randomness in the system. When water is heated, the temperature increases, which increases the entropy.

How is cross-entropy related to entropy? How is this concept applicable to the world of statistics? What does the value obtained by cross-entropy signify? These are just some of the questions, but the list is quite long. In this article, an effort is made to provide clarity on some concepts of entropy and cross-entropy.

As mentioned above, entropy can be defined as randomness, or in the world of probability as uncertainty or unpredictability. Let's start with the basic understanding. Before we dive deep into the concept of entropy, it is important to understand the concept of information theory, which Claude Shannon presented in his 1948 mathematical paper. It is based on how effectively a message can be sent from a sender to a receiver. As we know, low-level machine language encodes and decodes in the form of bits, namely 0s and 1s. Information can be passed in the form of bits, each of which is either 0 or 1. But the question is, how much of the information passed is useful, and how much of it actually reaches the receiver? Is there any kind of loss of information?

Let's say a bank needs to provide a loan to a customer. The bank first needs to protect itself from the risk that the loan will not be repaid. So, if I have a system that accepts the loan application of a potential customer and outputs whether the customer will default or not default, then this information can be given in a one-bit code. In other words, the system just has to say Yes or No to the bank on whether to accept the application and provide the loan. This requires just one bit, and that one bit encodes the same information. This will help the bank make a better decision. If there is a system that provides the information that the potential customer will default (1) or not default (0), then an entropy (degree of uncertainty) can be calculated.
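To make the loan example concrete, here is a minimal sketch of how the entropy of a default (1) or not default (0) outcome could be calculated. It assumes the standard Shannon entropy formula for a two-outcome variable, H = -p·log2(p) - (1-p)·log2(1-p), measured in bits. The function name `binary_entropy` and the probabilities used below are hypothetical choices for illustration, not something taken from a real bank's system.

```python
import math


def binary_entropy(p_default: float) -> float:
    """Shannon entropy, in bits, of a default (1) / not default (0) outcome.

    p_default is an assumed probability that the customer defaults.
    """
    if not 0.0 <= p_default <= 1.0:
        raise ValueError("p_default must be a probability in [0, 1]")

    entropy = 0.0
    for p in (p_default, 1.0 - p_default):
        if p > 0.0:  # by convention, the term 0 * log2(0) is treated as 0
            entropy -= p * math.log2(p)
    return entropy


# Illustrative probabilities only; they are not real default rates.
for p in (0.0, 0.1, 0.5, 0.9, 1.0):
    print(f"P(default) = {p:.1f} -> entropy = {binary_entropy(p):.3f} bits")
```

Under these assumptions, the uncertainty is highest (exactly one bit) when default and no default are equally likely, and it drops to zero when the outcome is certain, which matches the idea of entropy as a degree of uncertainty.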